You know that feeling when you're reading a paper and it references "the seminal work on attention mechanisms" but doesn't name it? Or when you want to trace how a method evolved but end up clicking through 15 citations that each reference 40 more?

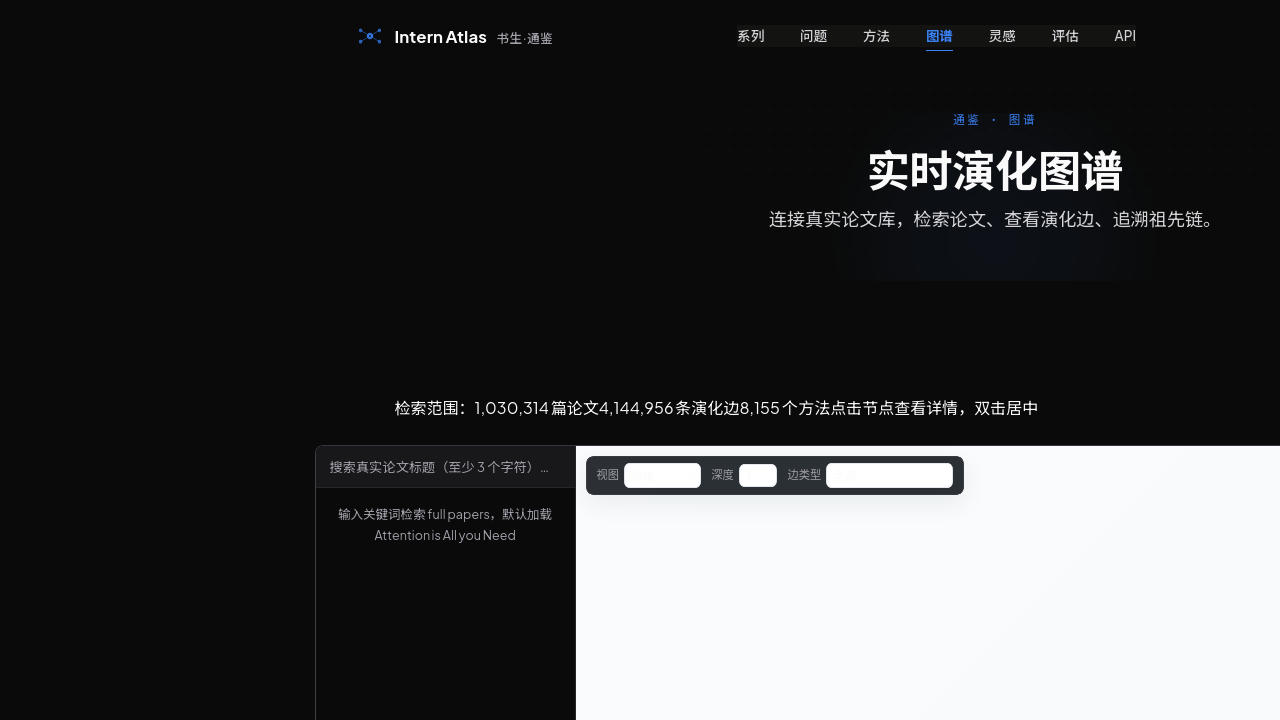

A Shanghai AI Laboratory team just built something that solves this. They converted 1,030,314 AI papers spanning 60 years into an explicit, machine-readable graph of how methods emerge, adapt, and build on each other. Not citations—method relationships. With evidence chains.

What They Built

Intern-Atlas is a method-centric evolution graph, formally defined as G = (V, E, τ, ρ). At the reported scale, it contains:

| Component | Value |
|---|---|
| Papers indexed | 1,030,314 |
| Canonical method nodes | 8,155 |
| Method aliases | 9,545 |
| Semantic edges | 9,410,201 |
| Temporal span | 60 years (1965-2025) |

Each edge has a semantic type—not just "cites" but what the relationship actually means:

| Edge Type | Meaning |
|---|---|
| extends | Direct extension of method |
| improves | Enhancement of existing approach |
| replaces | Replacement of deprecated method |
| adapts | Adaptation to new domain/task |
| uses_component | Component reuse |
| compares | Comparative analysis |
| background | Contextual relationship |

The key innovation is that every edge carries a four-field evidence record: Bottleneck (the problem being addressed), Mechanism (the solution approach), Trade-off (the cost or limitation), and a Confidence score. You're not just seeing that BERT "extends" Transformer; you're seeing which bottleneck BERT addressed and which trade-off it introduced.
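To make the edge structure concrete, here is a minimal Python sketch of a typed edge with its evidence record. The class and field names (`EdgeType`, `Evidence`, `Edge`, `source`, `target`) are my own, not the dataset's actual schema, and the assumption that confidence lies in [0, 1] is mine as well. The evidence strings are placeholders, not values from the graph.

```python
from dataclasses import dataclass
from enum import Enum


class EdgeType(Enum):
    """The seven semantic edge types listed in the table above."""
    EXTENDS = "extends"
    IMPROVES = "improves"
    REPLACES = "replaces"
    ADAPTS = "adapts"
    USES_COMPONENT = "uses_component"
    COMPARES = "compares"
    BACKGROUND = "background"


@dataclass(frozen=True)
class Evidence:
    """Four-field evidence record attached to each edge."""
    bottleneck: str    # the problem being addressed
    mechanism: str     # the solution approach
    trade_off: str     # the cost or limitation
    confidence: float  # ASSUMPTION: a score in [0, 1]

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


@dataclass(frozen=True)
class Edge:
    source: str          # hypothetical field: the newer method
    target: str          # hypothetical field: the method it builds on
    edge_type: EdgeType
    evidence: Evidence


# Placeholder values only; the real record would come from the dataset.
edge = Edge(
    source="BERT",
    target="Transformer",
    edge_type=EdgeType("extends"),
    evidence=Evidence(
        bottleneck="<bottleneck text from the evidence record>",
        mechanism="<mechanism text from the evidence record>",
        trade_off="<trade-off text from the evidence record>",
        confidence=0.9,  # illustrative number, not a reported score
    ),
)
print(edge.edge_type.value, edge.evidence.confidence)
```

Modeling the edge type as an enum means an unknown relationship string fails loudly at load time instead of silently becoming a new category.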

The Algorithm

They built a Self-Guided Temporal Monte Carlo Tree Search (SGT-MCTS) for tracing evolution chains. The selection rule balances exploitation (following high-confidence paths) and exploration (visiting under-explored branches) while enforcing temporal coherence.
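The exact SGT-MCTS selection rule isn't reproduced here, so the following is only a rough sketch of the idea. It assumes a standard UCB1-style score (mean value plus an exploration bonus) and a simple temporal check: when tracing backwards, a predecessor can't be newer than the method that builds on it. `Child`, `select_child`, and the constant `c` are hypothetical names, not the authors' implementation.

```python
import math
from dataclasses import dataclass


@dataclass
class Child:
    name: str
    year: int
    total_value: float = 0.0  # sum of rewards seen so far (e.g. path confidence)
    visits: int = 0


def select_child(parent_year, parent_visits, children, c=1.4):
    """Pick the next candidate predecessor while tracing backwards in time.

    ASSUMPTION: UCB1 stands in for the paper's selection rule.
    """
    # Temporal coherence: drop candidates published after the parent.
    valid = [ch for ch in children if ch.year <= parent_year]
    if not valid:
        return None

    def ucb(ch):
        if ch.visits == 0:
            return math.inf  # always try unexplored branches first
        exploit = ch.total_value / ch.visits  # follow high-value paths
        explore = c * math.sqrt(math.log(parent_visits) / ch.visits)
        return exploit + explore

    return max(valid, key=ucb)


candidates = [
    Child("A", 2017, total_value=9.0, visits=10),
    Child("B", 2020, total_value=2.0, visits=4),
    Child("C", 2024),  # unvisited, but newer than a 2023 parent
]
# With c=0 the rule is pure exploitation, and C is filtered out by year.
print(select_child(2023, 14, candidates, c=0.0).name)  # prints "A"
```

Raising `c` shifts the balance toward less-visited branches, while the year filter is applied before scoring so that temporally impossible edges never compete.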

The algorithm improved node recall by 39.9 percentage points over beam search baselines when reconstructing lineage chains. That's the difference between missing half the evolution story and catching almost all of it.

What You Can Query

Three API endpoints:

| Endpoint | Function |
|---|---|
| /v1/query | Retrieve subgraph by concept |
| /v1/trace | Trace evolution chain |
| /v1/node | Get method metadata, bottlenecks, relationships |

Example trace: Mamba → Linear Attention → Scaled Dot-Product Attention. Each node has metadata: aliases, year, paper ID, bottleneck solved, bottleneck remaining, parent method.
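To show how the per-node metadata can produce a trace like the one above, here is a small in-memory sketch that follows parent pointers. It does not call the real API. The `MethodNode` class, its field names, and the `trace` function are assumptions for illustration; the years are the publication years of the well-known papers, and the remaining metadata fields are left empty rather than guessed.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MethodNode:
    # Field names mirror the metadata listed in the text; the real schema may differ.
    name: str
    year: int
    parent: Optional[str] = None
    aliases: list[str] = field(default_factory=list)
    paper_id: Optional[str] = None
    bottleneck_solved: Optional[str] = None
    bottleneck_remaining: Optional[str] = None


def trace(nodes: dict[str, MethodNode], start: str) -> list[str]:
    """Follow parent pointers from `start` back to a root method."""
    chain, seen = [], set()
    current = start
    while current is not None:
        if current in seen:  # guard against malformed data
            raise ValueError(f"cycle detected at {current!r}")
        seen.add(current)
        chain.append(current)
        current = nodes[current].parent
    return chain


nodes = {
    "Mamba": MethodNode("Mamba", 2023, parent="Linear Attention"),
    "Linear Attention": MethodNode(
        "Linear Attention", 2020, parent="Scaled Dot-Product Attention"
    ),
    "Scaled Dot-Product Attention": MethodNode(
        "Scaled Dot-Product Attention", 2017
    ),
}

print(" → ".join(trace(nodes, "Mamba")))
# prints "Mamba → Linear Attention → Scaled Dot-Product Attention"
```

A single `parent` field only yields one chain per method. The full graph has many typed edges per node, which is why the text describes a tree search rather than a simple pointer walk.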

Benchmarks

The quality metrics are strong:

| Metric | Score |
|---|---|
| Node Match Ratio | 91.0% |
| Path Semantic Correctness | 92.0% |

For idea evaluation—using the graph to judge whether a proposed research direction is novel and valid—the system achieved 0.81 correlation with human experts. That's 23 percentage points above LLM-as-judge baselines.

Why This Matters

Existing tools stop at document granularity. Semantic Scholar gives you TL;DR summaries. Connected Papers shows citation networks. Google Scholar tracks document citations. None of them capture method-level relationships with evidence.

The team explicitly identified four gaps they're filling:

- Information Loss — LLM parameters are biased snapshots; rare method transitions disappear

- Unknown Unknowns — "Nobody tried" vs "Tried but failed" are indistinguishable

- No Topology — "A optimized B's efficiency at accuracy cost" relationships aren't recorded anywhere

- Flat Retrieval — Current tools stop at paper-level matching

The dataset is open. The graph is queryable. The infrastructure is built for AI research agents—not human browsing.

Community Reaction

The HuggingFace page shows 37 upvotes as of May 4, with comments describing it as a "fascinating" and "timely" contribution. The timing is notable: Papers With Code was archived in April 2026 (the domain now redirects to HuggingFace Trending Papers). The 9,327 benchmark leaderboards and 79,817 paper-to-code linkages are no longer served canonically. The community has rescued the historical data as JSON dumps, but there's now a gap in research infrastructure.

Intern-Atlas isn't trying to fill that gap directly. It's building something different: a foundational data layer for AI agents, similar to how the Protein Data Bank enabled AlphaFold or ImageNet enabled modern computer vision.

Sources

- https://arxiv.org/abs/2604.28158
- https://arxiv.org/pdf/2604.28158
- https://huggingface.co/papers/2604.28158
- https://huggingface.co/datasets/OpenRaiser/Intern-Atlas
- https://intern-atlas.opendatalab.org.cn/
- https://huggingface.co/OpenRaiser
- https://www.themoonlight.io/review/intern-atlas-a-methodological-evolution-graph-as-research-infrastructure-for-ai-scientists
- https://news.ycombinator.com/item?id=42913251
- https://www.reddit.com/r/MachineLearning/comments/1aml3w4/d_what_are_your_favorite_tools_for_research/
- https://www.semanticscholar.org/
- https://openalex.org/

So What

The 14-axis bottleneck taxonomy is the part I'm uncertain about. The axes are computational complexity, memory efficiency, parallelization, accuracy, generalization, scalability, data efficiency, training stability, inference speed, expressiveness, simplicity, robustness, hyperparameter sensitivity, and training complexity. That's a lot of axes. Does every research contribution fit cleanly into one of these? What about contributions that shift the bottleneck from one axis to another without "solving" anything?
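One way to make the "shift" question concrete is to record two axes per contribution, the one it relieves and the one it worsens, and label the pair. This is my own sketch, not how Intern-Atlas stores it. The enum names and the `classify` function are hypothetical; only the 14 axis labels come from the taxonomy.

```python
from enum import Enum
from typing import Optional


class BottleneckAxis(Enum):
    COMPUTATIONAL_COMPLEXITY = "computational complexity"
    MEMORY_EFFICIENCY = "memory efficiency"
    PARALLELIZATION = "parallelization"
    ACCURACY = "accuracy"
    GENERALIZATION = "generalization"
    SCALABILITY = "scalability"
    DATA_EFFICIENCY = "data efficiency"
    TRAINING_STABILITY = "training stability"
    INFERENCE_SPEED = "inference speed"
    EXPRESSIVENESS = "expressiveness"
    SIMPLICITY = "simplicity"
    ROBUSTNESS = "robustness"
    HYPERPARAMETER_SENSITIVITY = "hyperparameter sensitivity"
    TRAINING_COMPLEXITY = "training complexity"


def classify(relieved: Optional[BottleneckAxis],
             worsened: Optional[BottleneckAxis]) -> str:
    """Label a contribution by which axes it relieves and worsens (hypothetical scheme)."""
    if relieved is not None and worsened is not None:
        # Relieving and worsening the same axis is contradictory input.
        return "unclear" if relieved is worsened else "shift"
    if relieved is not None:
        return "solve"
    if worsened is not None:
        return "regress"
    return "none"


# "A optimized B's efficiency at accuracy cost" maps to a shift between two axes.
print(classify(BottleneckAxis.MEMORY_EFFICIENCY, BottleneckAxis.ACCURACY))  # prints "shift"
```

Even this toy version shows the difficulty: a single-axis label can't express a shift, so any scheme that forces one axis per contribution loses the trade-off.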

The corpus is also post-2015 heavy, since most AI papers are recent, so the temporal coherence calibration likely leans on that period. The 1965-2010 tail might have sparser coverage.

But the core idea—converting implicit method relationships into explicit, queryable edges with evidence—is something the field has needed for years. If AI research agents are going to do anything useful beyond generating plausible-looking papers, they need this kind of infrastructure. Parameter counts and benchmark scores aren't enough. They need to know what actually worked, why it worked, and what it couldn't solve.