BAAI just dropped ExoActor—a framework that turns video generation models into a robot's imagination. Instead of hand-coding every joint trajectory, you just tell the robot what to do and it "imagines" the execution as a video, then physically performs it.

How It Works

ExoActor has a three-stage pipeline that decouples intent from execution:

Video Generation — A task instruction ("pick up the bottle") and an initial camera view are fed into video models like Kling 3. The system decomposes the instruction into atomic sub-actions (approach → bend → grasp → lift), then generates a third-person video of a human executing those steps.

Motion Estimation — The generated video is analyzed by GENMO (whole-body) and WiLoR (hands) to extract 3D poses, trajectories, and hand configurations frame by frame.

Motion Execution — The extracted motion is fed into a SONIC controller running on a Unitree G1 humanoid robot. The robot physically reproduces the "imagined" demonstration.

The clever trick is an embodiment transfer step. Video models are trained on humans, not robots. So ExoActor first converts the robot's observation into a human-like reference frame using Nano Banana Pro (Gemini 3.1 Pro) — preserving scene geometry while abstracting away the robot's morphology.

Benchmarks & Performance

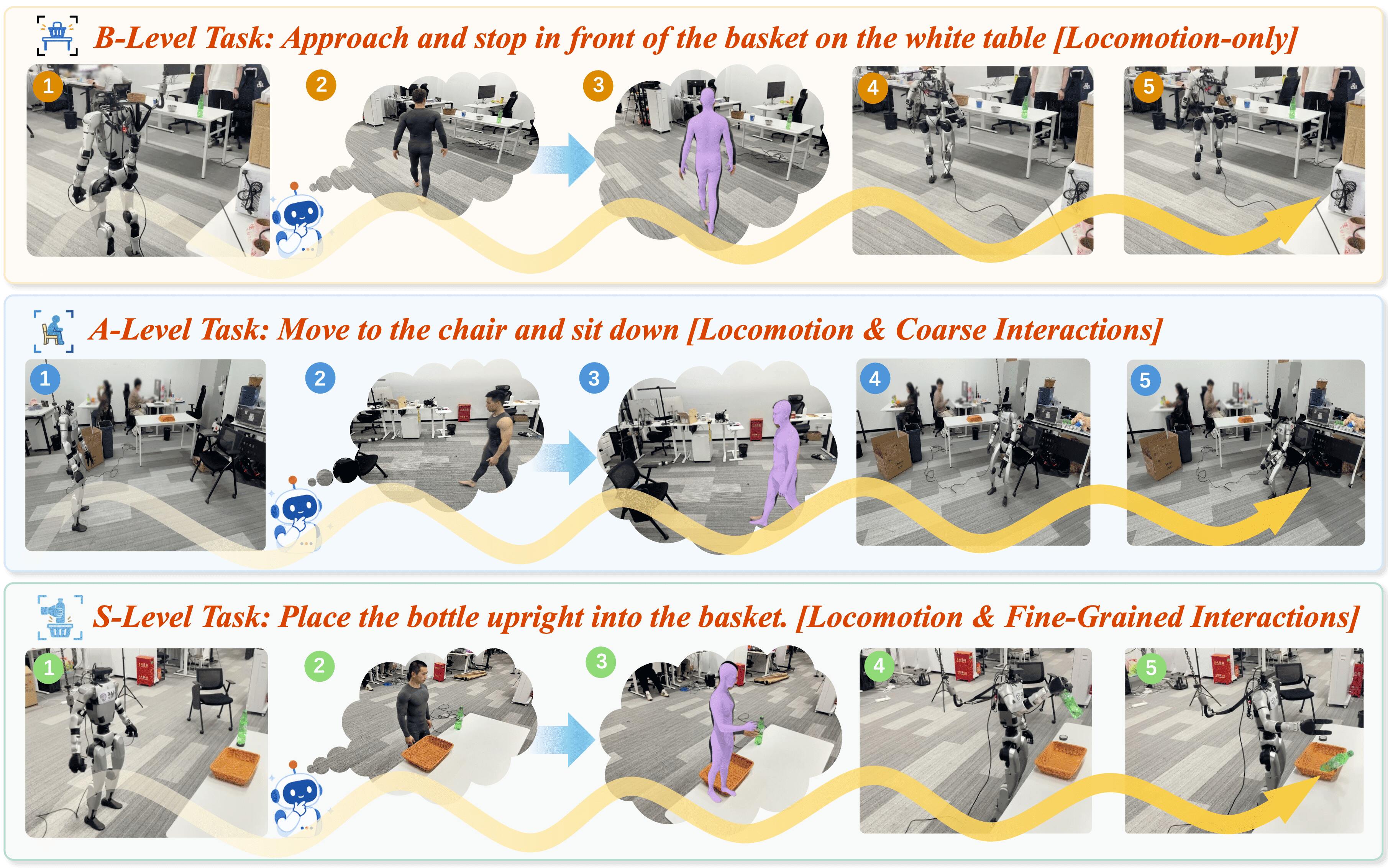

ExoActor was validated on three difficulty tiers:

| Level | Type | Examples |

|---|---|---|

| B (Easy) | Locomotion & navigation | Walking around obstacles, goal-directed navigation |

| A (Moderate) | Coarse interaction | Sweeping items off a table, sitting on a chair |

| S (Challenging) | Fine-grained manipulation | Picking up a bottle and placing it upright in a basket |

Latency breakdown per second of video:

| Module | Time (s) |

|---|---|

| Embodiment transfer | 10.7 |

| Task decomposition | 2.5 |

| Video generation | 13.2 |

| Whole-body motion estimation | 2.9 |

| Hand motion estimation | 16.4 |

Hand motion estimation is the bottleneck, taking 16.4 seconds per second of video. The current pipeline is entirely offline — you generate the full video before the robot moves.

What Makes It Different

"The uncomfortable part is that direct retargeting of human motion to a robot often introduces spatial errors. Just feeding the estimated motion directly into the controller preserves geometric fidelity better."

This insight from the paper is worth sitting with. Most approaches try to map human joint angles to robot equivalents (retargeting). ExoActor skips that entirely — it estimates motion from the generated video and lets the controller figure out the physical execution. The result is better spatial alignment for tasks that need precise positioning.

ExoActor also outperformed traditional RL and imitation learning baselines in interaction-rich scenarios. The video generation model implicitly encodes interaction dynamics that are nearly impossible to capture through conventional supervision.

Competitor comparison:

| Method | Approach | Key Limitation |

|---|---|---|

| ExoActor | Generated video → motion estimation → execution | Offline, open-loop |

| VideoMimic | Visual imitation from real video | Needs real demos |

| X-Humanoid | Human→humanoid video editing | Focuses on data generation, not control |

| EgoActor | VLM-based locomotion prediction | Same lab, different paradigm |

Limitations (There Are Several)

"Current video models prioritize visual plausibility over physical laws."

The generated videos look convincing but hallucinate contact dynamics. The robot might "see" itself grasping an object in the generated video, then fail because the physics don't actually check out.

Other issues:

- Open-loop execution: No real-time feedback. The robot commits to the generated plan.

- Wrist orientation: Fine-grained wrist rotations (vertical vs. horizontal grasps) are unreliable from monocular video.

- Latency: 45+ seconds of processing per second of action makes real-time impossible.

The authors acknowledge these openly and point to "streaming task imagination" — generating short video segments and executing them concurrently — as the path forward.

The Bigger Picture

BAAI is building a family of related work. ExoActor pairs with EgoActor (VLM-based locomotion prediction) from the same lab. The shared goal is moving humanoid control away from task-specific training and toward generative, generalizable behavior.

Video generation models hold an enormous amount of implicit world knowledge — how objects behave, how humans interact with them, what sequences of actions lead to outcomes. ExoActor is one of the first frameworks to channel that knowledge directly into physical execution.

It's offline, it's slow, and it makes physics mistakes. But the direction is right. A robot that can "imagine" its actions before executing them beats a robot that needs 10,000 human demonstrations for every new task.

Sources

https://arxiv.org/html/2604.27711v1 https://baai-agents.github.io/ExoActor/ https://huggingface.co/papers/2604.27711 https://arxiv.org/abs/2604.27711 https://baai-agents.github.io/RoboNoid/EgoActor/