What It Is

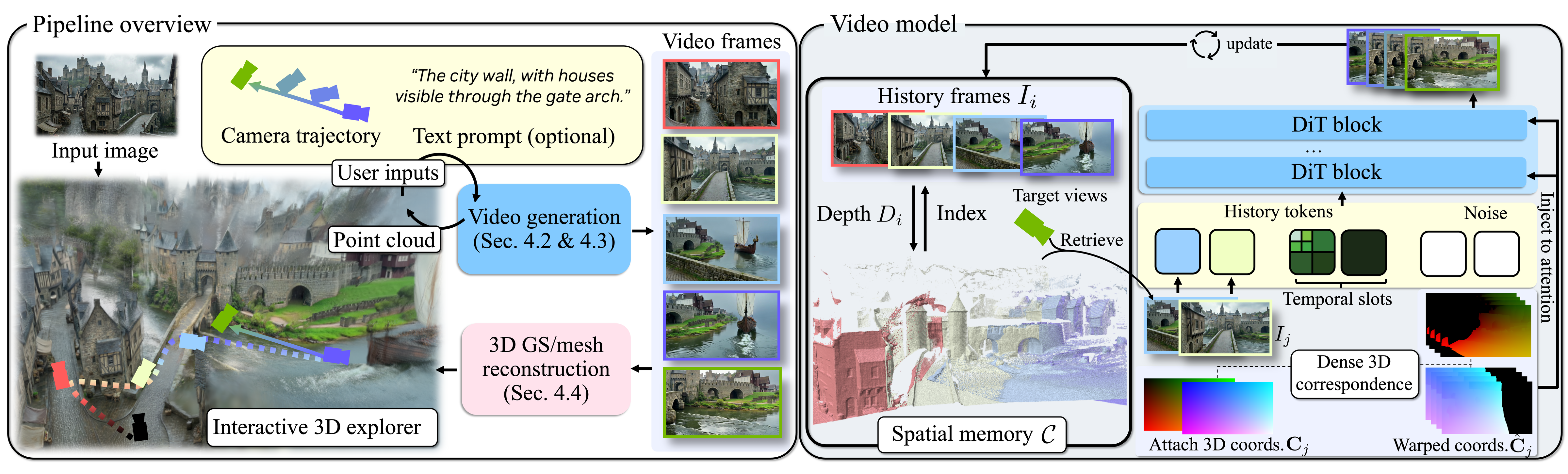

NVIDIA's Lyra 2.0 generates persistent, explorable 3D worlds from a single image using a "generative reconstruction" paradigm: it first generates a camera-controlled video, then lifts that video to 3D with feed-forward reconstruction.

TL;DR: Generate camera-controlled walkthrough videos, then lift them to 3D via feed-forward reconstruction. Per-frame geometry handles spatial forgetting. Self-augmented training corrects temporal drifting.

The Core Problem

Scaling to large, complex environments requires 3D-consistent video generation over long camera trajectories with large viewpoint changes and location revisits. Current video models degrade quickly due to:

| Problem | Description |
|---|---|
| Spatial Forgetting | Previously observed regions fall outside temporal context, forcing hallucination when revisited |
| Temporal Drifting | Autoregressive generation accumulates synthesis errors, distorting scene appearance and geometry |

The Solution

Spatial Forgetting → Per-Frame Geometry Routing

Lyra 2.0 maintains per-frame 3D geometry and uses it to route information from past frames to the view being generated:

- Retrieve relevant past frames with maximal visibility of target views

- Establish dense 3D correspondences via canonical coordinate warping

- Inject warped information into DiT via attention

- Rely on generative prior for appearance synthesis, not geometry hallucination
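
The retrieval and warping steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Lyra 2.0's implementation: `Camera`, `project`, `visibility`, and `retrieve_frames` are hypothetical names, the camera is a plain pinhole model, visibility is approximated as the fraction of a past frame's 3D points that land inside the target image, and occlusion is ignored.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class Camera:
    """Hypothetical pinhole camera; rotation is world-to-camera, row-major 3x3."""
    center: Point
    rotation: Sequence[Sequence[float]]
    focal: float
    width: int
    height: int


def project(cam: Camera, p: Point) -> Optional[Tuple[float, float]]:
    """Warp a world-space point into the camera; return None if it is not visible."""
    d = [p[i] - cam.center[i] for i in range(3)]
    x, y, z = (sum(cam.rotation[r][c] * d[c] for c in range(3)) for r in range(3))
    if z <= 0:  # behind the camera
        return None
    u = cam.focal * x / z + cam.width / 2
    v = cam.focal * y / z + cam.height / 2
    if 0 <= u < cam.width and 0 <= v < cam.height:
        return (u, v)
    return None  # outside the image bounds


def visibility(cam: Camera, points: Sequence[Point]) -> float:
    """Fraction of a frame's points that project inside the target view (no occlusion test)."""
    if not points:
        return 0.0
    visible = sum(1 for p in points if project(cam, p) is not None)
    return visible / len(points)


def retrieve_frames(target: Camera, frame_points: Sequence[Sequence[Point]], k: int) -> List[int]:
    """Indices of the k past frames whose geometry is most visible from the target view."""
    ranked = sorted(
        range(len(frame_points)),
        key=lambda i: (-visibility(target, frame_points[i]), i),
    )
    return ranked[:k]


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
target = Camera(center=(0.0, 0.0, 0.0), rotation=IDENTITY, focal=100.0, width=200, height=200)
past_frames = [
    [(0.0, 0.0, 5.0), (0.5, 0.5, 5.0)],    # fully in view
    [(0.0, 0.0, -5.0), (1.0, 1.0, -2.0)],  # behind the camera
    [(0.0, 0.0, 2.0), (100.0, 0.0, 1.0)],  # half in view
]
best = retrieve_frames(target, past_frames, k=2)
```

The sketch stops at selecting frames and computing target-pixel positions; in the system described here, the warped information would then be injected into the DiT through attention.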

Temporal Drifting → Self-Augmented Training

Expose the model to its own degraded outputs during training:

- Teach the model to correct drift rather than propagate it

- Use compressed temporal history alongside spatial memory
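
One way to picture self-augmented training is as a data-preparation step that sometimes swaps the clean conditioning history for a degraded version produced by the model itself, while keeping the clean frame as the target. The sketch below is a toy under loud assumptions: `make_training_example`, `drifting_rollout`, and the `p_augment` probability are hypothetical, frames are short lists of floats rather than video latents, and the "model" is a stand-in that simply adds growing error.

```python
import random
from typing import Callable, List, Sequence, Tuple

Frame = List[float]  # stand-in for a video latent


def drifting_rollout(history: Sequence[Frame]) -> List[Frame]:
    """Toy stand-in for the model re-generating its history: error grows per step."""
    return [[x + 0.1 * (i + 1) for x in frame] for i, frame in enumerate(history)]


def make_training_example(
    clip: Sequence[Frame],
    rollout_fn: Callable[[Sequence[Frame]], List[Frame]],
    p_augment: float,
    rng: random.Random,
) -> Tuple[List[Frame], Frame]:
    """Return (conditioning history, clean target frame) for one training step."""
    history, target = clip[:-1], clip[-1]
    if rng.random() < p_augment:
        # Condition on the model's own degraded output instead of ground truth.
        history = rollout_fn(history)
    else:
        history = [list(f) for f in history]
    # The target stays clean, so the loss rewards correcting the drift.
    return history, list(target)


clip = [[0.0], [1.0], [2.0], [3.0]]
rng = random.Random(0)
augmented, target = make_training_example(clip, drifting_rollout, 1.0, rng)
clean, _ = make_training_example(clip, drifting_rollout, 0.0, rng)
```

Because the target is always the clean frame, a model trained on such pairs is pushed to undo errors in its conditioning rather than carry them forward.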

Technical Specs

| Component | Specification |
|---|---|
| Video Diffusion Model | Wan2.1-based DiT, ~14B parameters |
| 3DGS Decoder | Augments RGB decoder, supervised by RGB output |
| Spatial Memory | Accumulated point clouds for information routing |
| Interactive Explorer | GUI for planning camera trajectories |
| Output Format | 3D Gaussian Splatting, exportable meshes |
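
The "accumulated point clouds" entry can be pictured as unprojecting each frame's depth map into world space and appending the result to a growing memory. The following is a rough sketch under assumptions that are not from the source: `unproject` and `SpatialMemory` are hypothetical names, the camera is a pinhole with its principal point at the image center, and depth is given as a nested list of metric values along the camera z axis.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def unproject(
    depth: Sequence[Sequence[float]],
    focal: float,
    center: Vec3,
    rotation: Sequence[Sequence[float]],
) -> List[Vec3]:
    """Lift an H x W depth map to world-space points.

    rotation is the world-to-camera 3x3 matrix; center is the camera position in world space.
    """
    h, w = len(depth), len(depth[0])
    cx, cy = w / 2, h / 2
    points: List[Vec3] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # treat non-positive depth as invalid
                continue
            cam = ((u - cx) * z / focal, (v - cy) * z / focal, z)
            # Camera -> world: transpose(rotation) @ cam + center.
            world = tuple(
                sum(rotation[r][c] * cam[r] for r in range(3)) + center[c]
                for c in range(3)
            )
            points.append(world)
    return points


class SpatialMemory:
    """Keeps one point cloud per generated frame (hypothetical structure)."""

    def __init__(self) -> None:
        self.frames: List[List[Vec3]] = []

    def add_frame(self, depth, focal, center, rotation) -> None:
        self.frames.append(unproject(depth, focal, center, rotation))

    def all_points(self) -> List[Vec3]:
        return [p for frame in self.frames for p in frame]


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
memory = SpatialMemory()
memory.add_frame([[2.0, 0.0], [2.0, 2.0]], focal=1.0, center=(1.0, 0.0, 0.0), rotation=IDENTITY)
```

Keeping the clouds per frame, rather than merging them into one set, matches the per-frame geometry described earlier and lets a retrieval step score each past frame separately.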

Competitor Comparison

| Method | Input | Long-Horizon | Scene Revisits | Isaac Sim |
|---|---|---|---|---|
| Lyra 2.0 | Single image | Yes (spatial memory) | Yes (per-frame routing) | Direct export |
| World Labs Marble | Single image | Claims persistence | Yes | Browser-based |
| 4D Gaussian Splatting | Multi-view video | Limited | N/A | Varies |
| WonderWorld | Video/image | Limited | No | No |

Key Differentiator

Lyra 2.0 requires no real multi-view training data. The 3DGS decoder is trained purely with synthetic data from video diffusion models via self-distillation.

It is presented as the first method to robustly handle long-horizon generation with scene revisits while maintaining 3D consistency.

Applications

| Domain | Use Case |
|---|---|
| Embodied AI/Robotics | Simulation environments for robot training via Isaac Sim |
| Autonomous Vehicles | Unlimited driving scenario generation |
| Gaming/VFX | Virtual environment creation, rapid prototyping |
| Industrial AI | Digital twin environments before deployment |

Community Reception

Hacker News (8 points):

"It looks very good. I wish there was an interactive demo." — smusamashah

r/GaussianSplatting: Positive technical reception in specialized 3D community

r/singularity: Related NVIDIA 3D generation posts (GEN3C, EdgeRunner) received 196-910 upvotes

Model Availability

| Resource | Details |
|---|---|
| Hugging Face Model | nvidia/Lyra-2.0 (252 downloads, 251 likes) |
| GitHub | nv-tlabs/lyra (1,667 stars) |
| License | NVIDIA Internal Scientific Research and Development Model License (non-commercial) |

Bottom Line

Lyra 2.0 marks a notable shift in 3D world creation. It targets spatial forgetting and temporal drifting, the two failure modes of long-horizon video generation described above, and yields persistent, explorable 3D worlds that can be exported directly to Isaac Sim for embodied AI simulation.

For robotics training pipelines, this could be transformative.