What happens when you train a language model on 260 billion tokens of text—all from before 1931?

Nick Levine, David Duvenaud, and Alec Radford (yes, the GPT-2/GPT-3 author) just released Talkie, a 13-billion-parameter model with a knowledge cutoff of December 31, 1930. It's the largest "vintage" language model to date, and it enables something genuinely novel: clean experiments on how LLMs generalize versus memorize.

The Setup

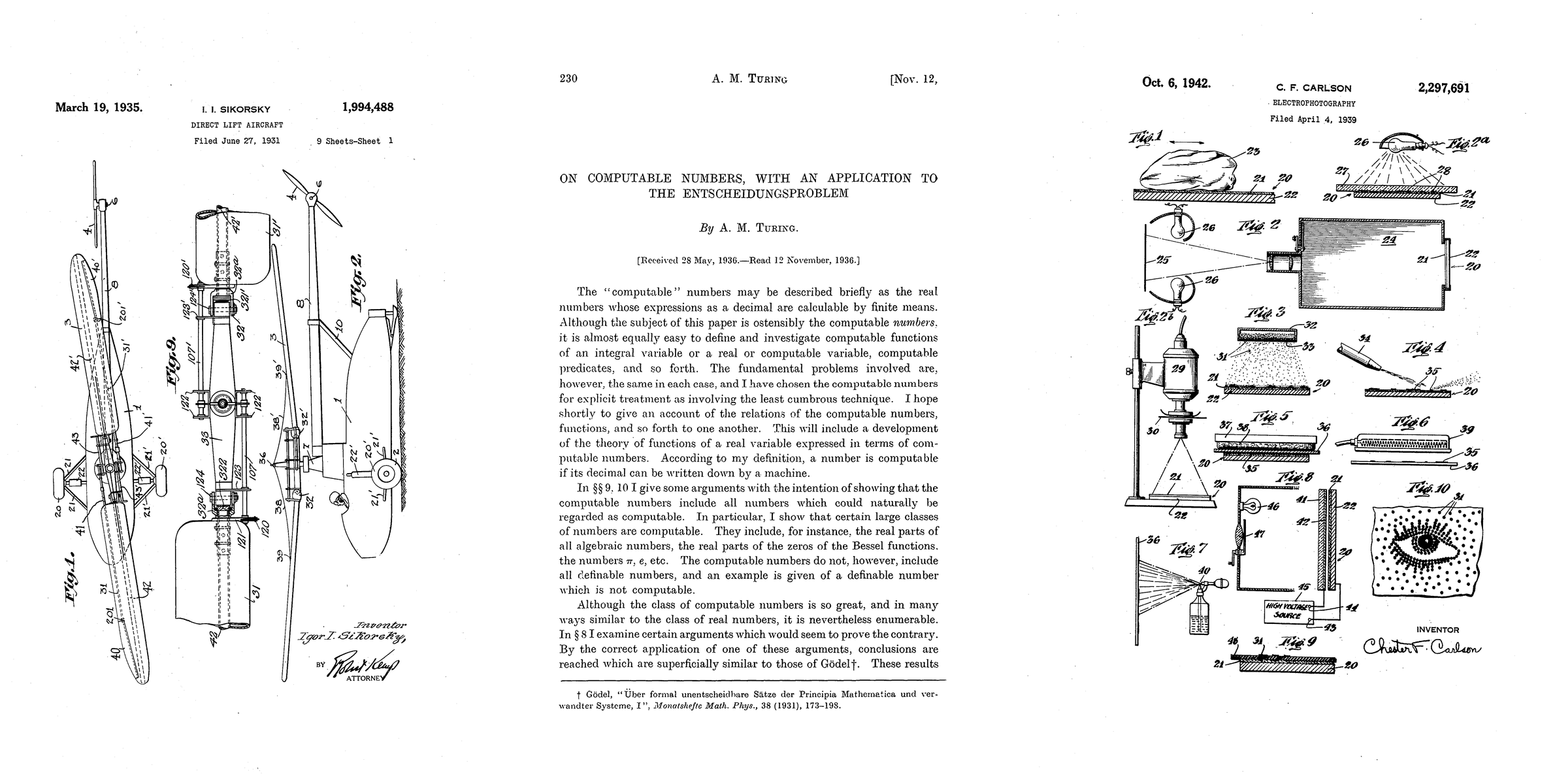

Talkie was trained on books, newspapers, scientific journals, patents, and case law, all of it material published before 1931 and now in the US public domain. The corpus spans more than 260 billion tokens sourced from the Institutional Data Initiative, the Internet Archive, and the Common Pile.

They built three variants:

- talkie-1930-13b-base: the vintage model
- talkie-1930-13b-it: instruction-tuned using only vintage reference materials (etiquette manuals, letter-writing guides, cookbooks, and encyclopedias from the era)
- talkie-web-13b-base: a "modern twin" trained on FineWeb with identical compute, for controlled comparisons

The Findings

The vintage model underperforms its modern twin on standard benchmarks. But when the researchers filtered out anachronistic questions (those that depend on knowledge from after 1930), the performance gap halved. On core language-understanding and numeracy tasks, the two models performed similarly.

More surprising: the vintage model can write Python code even though its training data predates digital computers. On HumanEval it produced some correct solutions, though these were limited to simple one-line programs and modifications of in-context examples. In one case it implemented a rotation-cipher decoding function, apparently by recognizing that decoding inverts the encoding shift rather than by recalling memorized code patterns.
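The post doesn't reproduce the prompt or the model's output. As a hypothetical illustration of the inverse relationship involved, assuming a Caesar-style shift over lowercase letters (the function names and the default shift of 5 are assumptions), decoding is simply encoding with the shift negated:

```python
def encode_rot(s: str, shift: int = 5) -> str:
    """Shift each lowercase letter forward by `shift`, wrapping z back to a.

    Characters outside a-z are passed through unchanged.
    """
    out = []
    for c in s:
        if "a" <= c <= "z":
            out.append(chr((ord(c) - ord("a") + shift) % 26 + ord("a")))
        else:
            out.append(c)
    return "".join(out)


def decode_rot(s: str, shift: int = 5) -> str:
    # The inverse operation: shift backward by the same amount.
    # Python's % always returns a non-negative result, so wrap-around still works.
    return encode_rot(s, -shift)


print(encode_rot("hello"))              # mjqqt
print(decode_rot(encode_rot("hello")))  # hello
```

The decoder is a one-line change to an encoder supplied in context, which is the kind of modification the post describes the model managing.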

The Temporal Leak Problem

The filtering isn't perfect. When asked about 1936, the model referenced legislation from 1935 and 1936, revealing editorial insertions made after 1930 that had slipped into the training corpus. To catch such leaks, the team built a document-level, n-gram-based anachronism classifier.
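The post describes the classifier only as "document-level" and "n-gram-based." A minimal sketch of one way such a scorer could work, assuming a hand-curated set of n-grams that should not occur before 1931 (the seed trigrams, the `threshold`, and all function names are hypothetical, not Talkie's implementation):

```python
import re

# Hypothetical seed set of trigrams that signal post-1930 content.
ANACHRONISTIC_TRIGRAMS = {
    ("world", "war", "ii"),
    ("social", "security", "act"),
}


def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def anachronism_score(text, flagged=ANACHRONISTIC_TRIGRAMS, n=3):
    """Fraction of the document's n-grams that appear in the flagged set."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    grams = ngrams(tokens, n)
    if not grams:
        return 0.0
    hits = sum(1 for g in grams if g in flagged)
    return hits / len(grams)


def is_anachronistic(text, threshold=0.01, **kwargs):
    """Flag the whole document (not individual passages) if its score exceeds the threshold."""
    return anachronism_score(text, **kwargs) > threshold


doc = "The Social Security Act of 1935 changed everything."
print(anachronism_score(doc))  # 1 of 6 trigrams is flagged
print(is_anachronistic(doc))   # True
```

Scoring at the document level means a single leaked footnote can drop an entire book, so the threshold trades corpus size against leak rate.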

OCR Quality Matters

Vintage models trained on OCR'd text learned at only 30% of the efficiency of models trained on human-transcribed text; regex-based cleaning raised that to 70%. The team is developing a "vintage OCR" system to improve data quality for future iterations.
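The post doesn't list the team's regex rules. The sketch below shows the kind of cleanup commonly applied to OCR'd book scans; the specific rules and the `clean_ocr` name are assumptions, not the Talkie pipeline:

```python
import re


def clean_ocr(text: str) -> str:
    # Drop lines that consist only of a page number (plus surrounding spaces).
    text = re.sub(r"^[ \t]*\d+[ \t]*$\n?", "", text, flags=re.MULTILINE)
    # Rejoin words hyphenated across a line break: "experi-\nment" -> "experiment".
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)
    # Turn single line breaks inside a paragraph into spaces; keep blank-line breaks.
    text = re.sub(r"(?<!\n)\n(?!\n)", " ", text)
    # Collapse runs of spaces and tabs left behind by the scan layout.
    text = re.sub(r"[ \t]+", " ", text)
    return text.strip()


raw = "The experi-\nment was a success.\n12\nIt was  repeated\nin 1929.\n\nNew paragraph."
print(clean_ocr(raw))
```

Rules like these are cheap but blunt (the page-number rule would also delete a legitimate line holding only a number), which is one reason a purpose-built OCR system could recover more of the gap.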

Future Prediction Evaluation

The team tested the model on roughly 5,000 NYT "On This Day" historical event descriptions. Surprisingness, measured in bits per byte, rose sharply for events after the 1930 cutoff, peaked for events from the 1950s and 1960s, and then plateaued. This suggests the model's predictions track the boundary between the periods it has and hasn't seen.
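Bits per byte normalizes a model's total negative log-likelihood by the text's length in bytes rather than in tokens, so scores don't depend on how the tokenizer splits the text. A minimal sketch, assuming you already have per-token negative log-likelihoods in nats from the model; the decade bucketing is a hypothetical way to produce the trend described above, not the team's evaluation code:

```python
import math
from collections import defaultdict


def bits_per_byte(token_nlls_nats, text):
    """Convert summed per-token negative log-likelihoods (nats) to bits per UTF-8 byte."""
    n_bytes = len(text.encode("utf-8"))
    total_bits = sum(token_nlls_nats) / math.log(2)  # nats -> bits
    return total_bits / n_bytes


def mean_bpb_by_decade(records):
    """Average bits per byte over (event_year, bpb) pairs, bucketed by decade."""
    buckets = defaultdict(list)
    for year, bpb in records:
        buckets[year // 10 * 10].append(bpb)
    return {decade: sum(v) / len(v) for decade, v in sorted(buckets.items())}


# Two tokens covering 4 bytes, each costing 2 bits: 4 bits / 4 bytes.
print(bits_per_byte([2 * math.log(2), 2 * math.log(2)], "abcd"))
print(mean_bpb_by_decade([(1925, 1.0), (1928, 2.0), (1955, 3.0)]))
```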

What This Means for LLM Research

Leaks aside, this setup is contamination-free by construction: the model cannot have memorized answers to benchmarks written after 1930. That enables far cleaner generalization experiments, of the kind Demis Hassabis has asked about: could a model with a 1911 cutoff discover General Relativity?

Essentially all modern LLMs are trained on web data, directly or through distillation. Talkie shows that different training data produces different model behaviors and dispositions. The next phase: a GPT-3.5-level model trained on a vintage corpus of more than a trillion tokens.

Technical Details

- Parameters: 13B

- Training tokens: 260B

- License: Apache 2.0

- Requires: ~28 GB VRAM for bfloat16 inference

- Live demo: talkie-lm.com/chat
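The ~28 GB figure is consistent with simple arithmetic: bfloat16 stores each parameter in 2 bytes, so 13 billion parameters take about 26 GB for the weights alone. Attributing the remaining headroom to activations, the KV cache, and framework overhead is an assumption, and "13B" is itself a rounded count:

```python
def weight_memory_gb(n_params: float, bytes_per_param: int = 2) -> float:
    """Memory for model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


weights_gb = weight_memory_gb(13e9)  # bfloat16: 2 bytes per parameter
headroom_gb = 28 - weights_gb        # what the ~28 GB figure leaves beyond the weights
print(f"weights: {weights_gb:.1f} GB, headroom: {headroom_gb:.1f} GB")
# weights: 26.0 GB, headroom: 2.0 GB
```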

Sources

- https://talkie-lm.com/introducing-talkie
- https://github.com/talkie-lm/talkie
- https://huggingface.co/talkie-lm/talkie-1930-13b-base
- https://huggingface.co/talkie-lm/talkie-1930-13b-it
- https://reddit.com/r/singularity/comments/1sxp4ha/talkie_a_13b_lm_trained_exclusively_on_pre1931/